Hi,

I am doing a uni assignment and have a few questions.

I made an AC generator using a magnet and a crankshaft that pushes the magnet in and out of a coil.

Using my multimeter, I get a voltage of 1.5V AC roughly (varies between 1 and 2 volts). I wanted to power a mini light bulb with this circuit or preferably magnetize a nail using the voltage I get out.

I hooked the terminals of my generator to a multiplier circuit, which then gave ~10V DC on its output terminals. How can I use this voltage to power a lightbulb?

When I connect the lightbulb, the voltage over the lightbulb goes to zero.

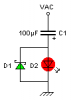

This is my circuit:

**broken link removed**

The resistor circled is the lightbulb.

The capacitors are low leakage capacitors I bought at a store. The problem is as soon as the lightbulb is connected, the voltage sinks to zero.

If I connect a 10k resistor as the load, I can measure the full 10V drop over the resistor. However, if I connect the lightbulb, either in series with the resistor or on its own, the voltage sinks to zero.

I can't understand it. If I put a 100 ohm resistor where I have marked on my circuit, the voltage also sinks to zero. With 1k I get roughly the full voltage drop.

Does it have something to do with the impedance of the circuit? Please help me light a lightbulb!
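
A rough way to frame the impedance question is to treat the multiplier output as an ideal 10V source in series with some internal resistance, so that any load forms a voltage divider with it. The sketch below is only an illustration under that assumption: the 300 ohm source resistance is a hypothetical number, not something measured, and a real multiplier's output impedance also depends on the capacitor values and on how fast the magnet is cranked. The 10 ohm entry stands in for a cold bulb filament, which is also an assumed value.

```python
def loaded_voltage(v_open, r_source, r_load):
    """Voltage across r_load when fed from v_open through r_source.

    Simple resistive divider: V_load = V_open * R_load / (R_source + R_load).
    """
    return v_open * r_load / (r_source + r_load)


V_OPEN = 10.0     # volts, the unloaded multiplier output reported above
R_SOURCE = 300.0  # ohms, HYPOTHETICAL internal resistance, for illustration only

# 10k, 1k and 100 ohm are the resistors tried above;
# 10 ohm is an ASSUMED stand-in for a cold bulb filament.
for r_load in (10_000.0, 1_000.0, 100.0, 10.0):
    v = loaded_voltage(V_OPEN, R_SOURCE, r_load)
    print(f"{r_load:>8.0f} ohm load -> {v:5.2f} V")
```

With these assumed numbers the trend matches the readings above: high-resistance loads see most of the 10V, while low-resistance loads pull the output down toward zero. It does not reproduce the measurements exactly, so the simple model is at best a starting point.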

Thanks so much!
