Hey guys,

Can anyone please explain how to read and understand the pull-up/pull-down resistors in ECM schematics?

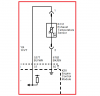

See the image: I marked the pull-up resistor, and I would like to know how it works.

How do pull-up and pull-down resistors work?

What does the triangle symbol on the pull-up stand for?

Thanks
