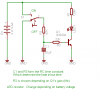

Hi, I plan to make a handheld 6-LED flashlight. I'd like to 'sophisticate' the switching a bit: the LEDs should fade on over 1 second when switched on, hold their brightness, then fade off over 1 second when switched off.

I need help finding the simplest circuit to achieve the fade effect. It will be built into a standard-sized flashlight.

I'm a novice at electronics, so please be very instructive, especially when it comes to component values.

Thanks in advance!
